Problem/Motivation

I've been looking into using Drupal with millions of nodes. One problem is that the admin/settings/search page is very slow. This tends to cause CPU quota errors on shared hosting.

The query causing the problem is this one:

SELECT COUNT(*) FROM node n LEFT JOIN search_dataset d ON d.type = 'node' AND d.sid = n.nid WHERE n.status = 1 AND (d.sid IS NULL OR d.reindex <> 0)

which has roughly the same problems as the query in node_update_index(), and could be fixed with a similar approach.

Proposed resolution

When a node is added to Drupal, add an entry to the search dataset so that we can avoid this join and the NULL thing and just look for d.reindex <> 0 to find the status.

Also may need to set the d.reindex value to something that will trigger new nodes to be indexed first in NodeSearch::updateIndex, like a value of 1, and the code in that function can also be simplified so it does not have to consider NULL entries.

Remaining tasks

Make a patch.

User interface changes

Faster cron and search status page loading.

API changes

Not really.

| Comment | File | Size | Author |

|---|---|---|---|

| #53 | queries_on_search_admin-312395-53.patch | 10.4 KB | manuel garcia |

| #47 | explain2.png | 15.66 KB | jhodgdon |

| #47 | explain.png | 15.28 KB | jhodgdon |

| #41 | interdiff-312395-34-41.txt | 8.61 KB | travis-bradbury |

| #41 | indexing-slow-on-many-node-sites-312395-41.patch | 11.2 KB | travis-bradbury |

Comments

Comment #1

robertdouglass commentedSub.

Comment #2

slantview commentedHave you tried converting your tables to INNODB ? Just wondering if there would be any performance gain doing that.

Comment #3

Wesley Tanaka commentedI haven't tried INNODB

Comment #4

caole261188 commentedsubscribe

Comment #5

jhodgdonThis needs to be fixed in Drupal 7 first, then backported to Drupal 6.

Comment #6

jhodgdonI looked into this for Drupal 7, where the path is now admin/config/search/settings -- just to see what it was doing and verify it's the same in D7... (it is):

In both D6 and D7, the respective path's page callback is search_admin_settings(), and the offending queries are called from code that looks like this in D7 (very similar in D6 except the active modules bit and it's hook_search($op = 'status') instead of hook_search_status()):

node_search_status() [D7] or node_search($op='status') [D6] contains the slow query.

Comment #7

jhodgdonSo there are a couple of things to consider here:

a) We'll probably be removing the WHERE -- see #239196: Indexing status shown on search settings page is incorrect

b) There is no index in the node table on field status -- there are just a couple of combined ones that include status and some other fields. Not sure why?

c) The only index in search.dataset is the primary key (combination of sid and type).

Maybe we could/should add indexes:

- status field for node

- sid, reindex, type for search_dataset (three different indexes)

I don't know enough about indexes and databases to know whether this would be a good idea or not?

Comment #8

jhodgdonI've just closed #312393: Performance: node_update_index() slow with large numbers of nodes as a duplicate of this issue, because it's pointing out that nearly the same query that happens during node_update_index() is also slow. The query in question is:

SELECT n.nid FROM node n LEFT JOIN search_dataset d ON d.type = 'node' AND d.sid = n.nid WHERE d.sid IS NULL OR d.reindex <> 0 ORDER BY d.reindex ASC, n.nid ASC LIMIT 0, 100;

This is the same query as above except that the one mentioned here for the Search admin page counts the entries, and this query finds the first N to index.

Comment #9

jhodgdonadding tags

Comment #10

damien tournoud commentedCounting will ever be slow, even if you add an index, as long as the dataset you are counting is large.

Those query do two things: select (or count the number of) nodes marked as needing reindexing (in search_dataset), select (or count the number of) nodes that have never been indexed (ie. are not yet in search_dataset).

There is only one real way to improve that, it is to make sure that every node has an entry in search_dataset (as identified by Wesley Tanaka in the other issue). This way you can limit the query to one table, and you can add the indexes to make it reasonably fast. This is basically what the Apachesolr integration module does.

Comment #11

jhodgdonReally, there is only one way to improve it? I was under the impression that adding indices to database tables generally improves speed, even if there are joins involved? But I'm not any kind of expert in database optimization...

Comment #12

damien tournoud commented@jhodgdon: in the current situation, the table scan cannot be avoided: we ask the database to list all the rows in {node} that doesn't have an entry in {search_dataset}. The only way to do that is to scan {node}, lookup the value in {search_dataset} ([sid, type] is a primary key, so the database engine will use that) and return the row if no value is found. No index can improve the situation here. Adding a random index will only do one thing: reduce the insert performance.

Comment #13

geerlingguy commentedSubscribe.

Comment #14

jhodgdonDamien Tournoud: Thanks for the explanation... I thought that maybe having those indexes might help with the WHERE part of the operation, but I see your point that the main slowdown is in the join-with-nulls.

So.... Back to the idea of having the node module add each node to the search dataset table when it's first created....

Let's see.

node_update_index() currently finds nodes either never added to {search_dataset} or with their {search_dataset}.reindex bit set to something non-zero, and orders them ascending by .reindex. search_node_insert() and other functions that call search_touch_node() to indicate "this node needs a reindex" are currently putting REQUEST_TIME in .reindex whenever a node is edited.

So if during node creation, the node was added to search_dataset with .reindex=1, that could indicate "new node, high priority for reindex". The way to do that would be to make a search_node_update() function -- i.e. use hook_node_insert().

We would also need to modify the query in node_search_status(), where it calculates $remaining, to remove the join and make it look for .reindex > 1 instead of NULL or non-zero.

That should work, I think?

Comment #15

jhodgdonHere's a separate issue that I noticed while thinking about this issue:

#735154: search_touch_node()/search_mark_for_reindex() should not update if already touched

Comment #16

dawehnerOne big performance improvement can be done if the ORDER BY criterias can be dropped.

At least the node.nid part isn't required from my perspective.

The reindex orderning itself makes sense.

Comment #17

sun.core commentedComment #18

mgiffordIs this still likely to be an issue in D8?

Comment #19

jhodgdonAs far as I know, the queries are identical in Drupal 8.x, so yes.

Comment #20

jhodgdonWe probably need to do this in 8 and it should be easy... maybe a good Novice issue?

Comment #21

julfabre commentedOk, I'm at Drupal Dev Day's and I take it !

Comment #22

travis-bradbury commentedHere's a start.

Before the patch nodes are identified for indexing by existing in the node table but not in search_dataset or existing in search_dataset with a non-zero reindex value.

This patch saves all new nodes to search_dataset with reindex=1. Queries to find nodes requiring indexing now use only the search_dataset table.

Behavior before the patch:

Created two new nodes.

/admin/config/search/pages indicates the status of nodes indexed (eg: 0 of 2 items indexed)

Ran cron.

Confirmed nodes with a non-zero reindex value have been indexed (now have data in search_dataset and reindex is 0).

/admin/config/search/pages now indicates all items have been indexed.

After the patch:

Created two new nodes.

/admin/config/search/pages indicates the status of nodes indexed (eg: 2 of 4 items indexed).

Ran cron.

Confirmed nodes with a non-zero reindex value have been indexed (now have data in search_dataset and reindex is 0).

/admin/config/search/pages now indicates all items have been indexed.

Comment #23

jhodgdonThanks very much for the patch!

Don't forget to (a) assign the issue to yourself and (b) set the status to "needs review" when you upload a patch file.

So... This looks pretty reasonable. I'll set it to Needs Review to see if the tests fail. Then if that works, there are a couple of nitpicks to take care of with the patch in code/comments.

Comment #24

jhodgdonCosmetic and coding standards things to fix up in this patch:

Comments need to be sentences. The text is Ok but needs to start with capital letter and end with . -- this will probably make the line longer than 80 characters, so it will need to wrap.

we don't use comments on the ends of lines.

I guess I need to point you to the comment standards:

https://www.drupal.org/node/1354#inline

I think we usually put operators on the ends of lines, not the beginning of the next line? Check the rest of Core and see.

Or better yet just make a longer line with the whole "" in one line. We do not have an 80-character restriction on code lines, only comments.

See previous comment.

Comment #25

jhodgdonAlso, since this is a Performance issue, I think it would be helpful if we could see how well this query performs under high volume.

Comment #27

jhodgdonThat seems to have been a general bot fail, retesting so we can see what the actual issues might be. Still Needs Work for #24

Comment #29

jhodgdonComment #31

travis-bradbury commentedFailing test might still be testbot (

Setup environment: failed to clear checkout directory.) but I'll submit a new patch rather than retest because I cleaned up the nitpicks from #24.For performance, I'm seeing significant improvement on my test site with 18200 nodes.

mysql's

benchmarkfunction before/after removing the join.I also saw an improvement in time per request using

ab(some information removed from output for brevity).Edit:

Forgot to mention an issue - nodes that are created but weren't indexed before applying the patch (ie, cron hasn't run yet or has run but didn't index everything before reaching its limit) will no longer be detected as requiring indexing.

Comment #32

travis-bradbury commentedSummoning testbot.

Edit: Okay, there is definitely a major problem with the patch. At first glance, I think a lot of tests are failing because they try and create a new node and it fails because there is no simpletest12345search_dataset table.

Comment #34

travis-bradbury commentedLocally, tests are passing if I require the search module in test classes or check that the search module is installed before trying to insert into {search_dataset}. I changed the patch to do the latter.

I'm not sure that it's the best solution. Would it be better to make a larger change, such as below?

a) Create a function similar to node_reindex_node_search that does the check for the search module and calls a function from the search module similar to search_mark_for_reindex.

b) Create functions to replace node_reindex_node_search and search_mark_for_reindex where the new functions update search_dataset.reindex where the node already exists or create a new entry in search_dataset where necessary.

Of the two, I think I'd prefer a) because it leaves detection of an update (versus a new node) to the node module, which already has

if ($update).That would still leave us with the problem of not-yet-indexed nodes no longer being detected for indexing after the patch is applied.

Comment #36

jhodgdonSo... You know, the more I think about it, the more I think this approach is not going to work.

What happens if you have a site up and have some nodes in it already, and then you enable the (patched) Search module? The nodes that predate the Search module are not going to be in the index, and I think they'll never be found for indexing with the new queries, right?

Comment #37

travis-bradbury commentedI've had a look at the last tests that failed.

\Drupal\search\Tests\SearchMultilingualEntityTesthas a few tests that look for how many rows are in search_dataset to test indexing. The tests are wrong after the patch changes the when nodes are added to search_dataset. Fixing them should be straight forward.You're right. I'm not sure how to solve that. The only way I can see to guarantee every node has been indexed is to check both {node} with {search_dataset} and look for nodes not in {search_dataset}, which is exactly what we started with. Is there another approach?

Comment #38

travis-bradbury commentedRegarding existing sites enabling the search module, what if we added all nodes to search_dataset during install of the search module? For each row in {node} we'd insert a row into {search_dataset}.

Enabling search suddenly on a site that already has many nodes should be less work than the original query (joining tables and looking for nulls), right?

Comment #39

travis-bradbury commentedI was playing around and assuming others would be on board with my suggestion in #38, it looks like it'd be reasonably quick.

This statement inserts a row into search_dataset for every node and it could be used in search's hook_install().

I'm sure that the performance of 1.16 seconds for 18,000 nodes would vary a lot in other environments, but even a extra few seconds to enable a module seems fair to me. If you're enabling search in the middle of a site that already has tens of thousands of nodes - or more - you've got bigger problems to worry about, like actually getting them all indexed, right?

Comment #40

jhodgdonI think that is a fine idea. We would need to make sure the INSERT was using ANSI SQL syntax so that it would work in all databases. We would also need a test for this new behavior that would create a couple of nodes, then enable the Search module, run indexing (you can see how other tests are doing that part), and verify those nodes show up in search results. As a bonus you could test the search status just after enabling Search (should say 2 items to index or however many nodes you made) and after indexing (should say 100%).

Great progress, thanks tbradbury!

Comment #41

travis-bradbury commentedThe insert with select statement syntax used in search.install is supported by sqlite and PostgreSQL. I tested it with SQLite 2.8.17 to be sure and I understand that Drupal requires 3.6.8 (up from 3.3) so I think we should be good for compatibility.

Comment #43

jhodgdonI'm a bit worried about this solution. We've had an expectation since Drupal Ancient History that the search index tables were data that could be recreated:

- If a site's search database seems corrupted (which can happen), the usual solution you'd find all over our docs and the Internet is "Clear out these search database tables and run cron until they're all created again". This would no longer be possible, because it would never be recreated properly.

- The default settings for modules like Backup and Migrate is to create but not populate the search tables, for the same reason -- because their data can be recreated (or at least it could be until this patch).

- We have an issue out requesting a feature of a Clear Index button, to get rid of corruption.

- Etc.

So I am not all that comfortable with modifying that expectation, as this patch does.

But I had an idea: instead of doing this in search_install(), which is a one-time thing and really it shouldn't be assuming knowledge of the NodeSearch plugin anyway... What if we instead put a similar query at the beginning of each search indexing run? It might slow down the indexing again, but it would at least make it so that on the search admin page, the query could be faster as in this patch.

Then we could also put something on the screen that says that counts of completeness are only accurate as of the last cron run.

Thoughts?

Comment #44

jhodgdonAlso one other point on this:

It is entirely possible (and even likely) to enable the core Search module but not enable/define any NodeSearch pages (you could be using a contrib module that defines its own search type). In this case, without this patch, nodes would never be added to the node_search index, because the indexing methods on NodeSearch are not called if there is no NodeSearch page defined.

So this patch, as it is, would slow down every node save operation by adding an unnecessary entry to the search database, and also would grow the search database unnecessarily, since it really shouldn't have any nodes in it at all in this case.

So... really I think we just need to do what I suggested in #43: at the beginning of search indexing in each cron run, add nodes so that the queries don't have to do the NULL check.

Comment #45

travis-bradbury commentedI'm definitely on board with not changing the expectation that the search index can be wiped out and re-created. The patch relies on all nodes being added on creation of on install of the search module, which is definitely more fragile for the reasons you pointed out.

So, we're looking at:

Are we left with the original slow query for each cron run? A reasonably quick way to update {search_dataset} with new nodes is

INSERT IGNORE INTO search_dataset (sid, langcode, reindex, type, data) SELECT nid, langcode, 1, 'node_search', '' FROM node. On my test, it took 0.16s to add 150 new rows to {search_dataset} where {node} had 18350 rows (versus 1.22 seconds to take an empty {search_dataset} and copy every id from {node} into it). The drawback to INSERT IGNORE is that it ignores more than just duplicate keys. I'm not sure the other ignored cases are a concern to us, but we'd also need to worry about other databases (MySQL/MariaDB usesINSERT IGNOREbut sqlite appears to useINSERT OR IGNORE).Do we still have people complaining of poor performance on these pages? I've seen issues similar to this one created around 2008 and Wesley Tanaka's blog mentioned in the issue summary which has a number of comments from people having major issues (queries too slow to finish before the connection times out - and they didn't have an astronomical number of nodes). If people still run into real-world problems it'd be interesting to know what their environment is. So far, I haven't really been able to replicate it. With 18,000 nodes on my test site I saw a pretty good increase in time to load the admin page (comment 31, 240ms before/140ms after) but I'm not seeing anything like the 60-second queries that people were describing years ago.

Next, I'll try and put together a patch that speeds up the admin page, leaves cron with the slow-but-reliable query, and tests that the admin page's index status is correct.

Comment #46

jhodgdonHm, I'm confused...

I think in order to have the admin page even be correct as of the last cron run, the first thing we need to do in each cron run is make sure there's an entry in search_dataset for every missing node. Right?

I don't think we want to do an INSERT IGNORE though... that could (as you point out) cause other problems.

So let's think. The slow query in the node update search index function that was reported on the other issue marked as a duplicate of this one (#312393: Performance: node_update_index() slow with large numbers of nodes) was:

SELECT n.nid FROM node n LEFT JOIN search_dataset d ON d.type = 'node' AND d.sid = n.nid WHERE d.sid IS NULL OR d.reindex <> 0 ORDER BY d.reindex ASC, n.nid ASC LIMIT 0, 100;

And the reporter said it was slow because it "... does a full table scan on the {node} table, and also has the painful "Using temporary; Using filesort" "

But to do the insert, we don't need the ORDER BY, we would just need something like (probably have the syntax wrong, but something like this):

INSERT INTO search_dataset FROM SELECT n.nid as sid, 'node_search' as type, 1 as reindex FROM node n LEFT JOIN search_dataset ON d.type = 'node_search' AND d.sid = n.nid WHERE d.sid IS NULL

That doesn't seem like it would be as bad as the original query, but maybe... probably would need to do an EXPLAIN on it to see what it does (after fixing my likely wrong syntax)...

Also as a note, these problems were reported on sites with millions of nodes, not thousands.

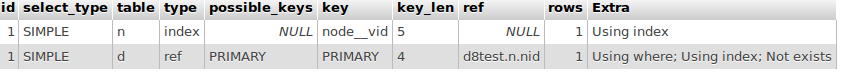

Comment #47

jhodgdonI did an explain on the SELECT part of this query (note: it had a small typo):

It looks pretty good:

As opposed to the query we're trying to get rid of:

So that seems like a good approach.

@tbradbury, do you still want to work on this?

Comment #48

travis-bradbury commentedSorry for being absent. I'm still happy to work on it. I'm a bit short on time but I should be able to create another patch in a couple days.

Comment #49

jhodgdonGreat, thanks! I've been rather busy lately too -- anyway, no need to apologize. Your help, whenever it comes, is much appreciated. :)

Comment #52

michaellenahan commentedI looked at this at the novice issues triage at Dublin2016. Might be a good issue for someone new to Drupal but with database knowledge. One thing to consider is if a database update is required.

Comment #53

manuel garcia commentedRerrolled #41.

Manually Fixed conflict on

core/modules/node/src/Plugin/Search/NodeSearch.php-updateIndex()now looks to already have the change intended in the previous patch, so I have not made any changes to that method. Needs review ;)Comment #65

kim.pepperReviewed as part of the Bugsmash initiative. As this is a performance optimisation, I believe we can re-classify this as a task.

Also, after a quick scan of the patch in #53 I think the implementation needs to happen in the Search module, not Node.

Comment #66

kim.pepperComment #70

quietone commentedThere doesn't seem to be novice type work here right now.

Comment #71

hablat commentedThis issue is also triggered when visiting normal /admin/config page and causes timeout on sites with lots of nodes. See

https://www.drupal.org/project/drupal/issues/3336621#comment-16067058

Comment #73

quietone commentedThe Search Module was approved for removal in #3476883: [Policy, no patch] Move Search module to contrib .

This is Postponed. The status is set according to two policies. The Remove a core extension and move it to a contributed project and the Extensions approved for removal policies.

The deprecation work is in #3565780: [meta] Tasks to deprecate the Search module and the removal work in #3565783: [meta] Tasks to remove the Search module.

Search will be moved to a contributed project before Drupal 12.0.0 is released.